Executive summary

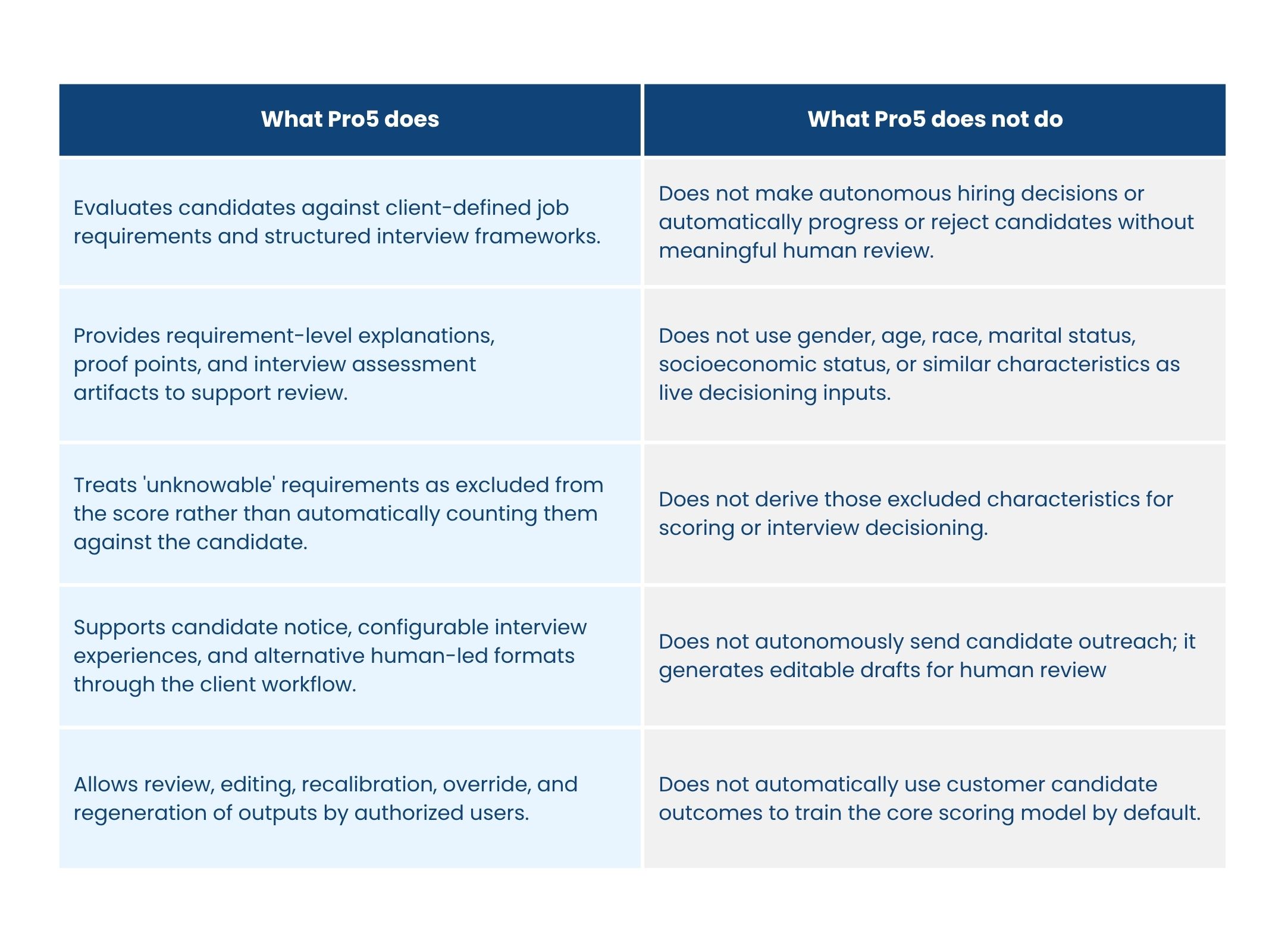

AI in hiring should not introduce a new opaque source of risk. At Pro5, fairness is treated as a design requirement across the hiring workflow: what data the system is allowed to use, how interviews are structured, how scores are calculated, how outputs are explained, and how humans remain accountable for final decisions.

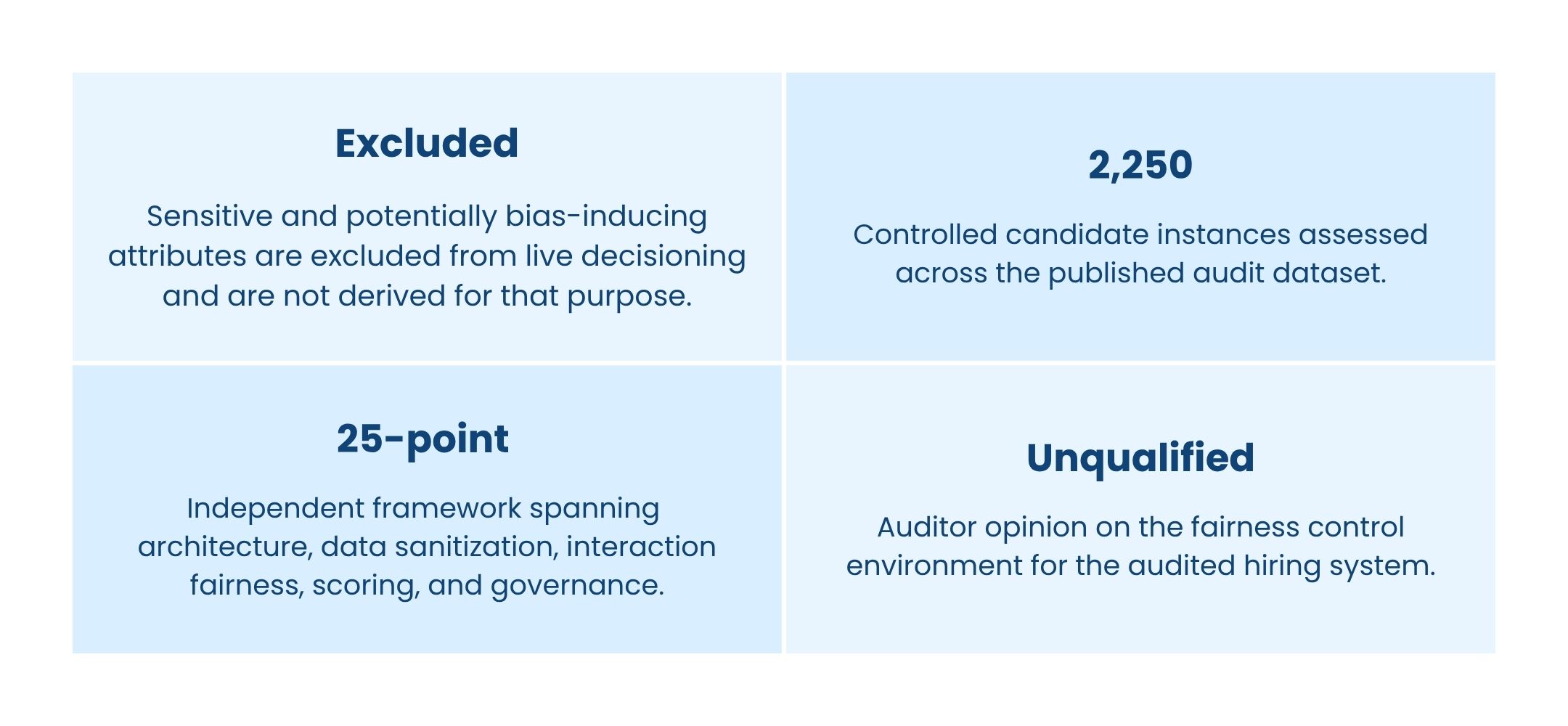

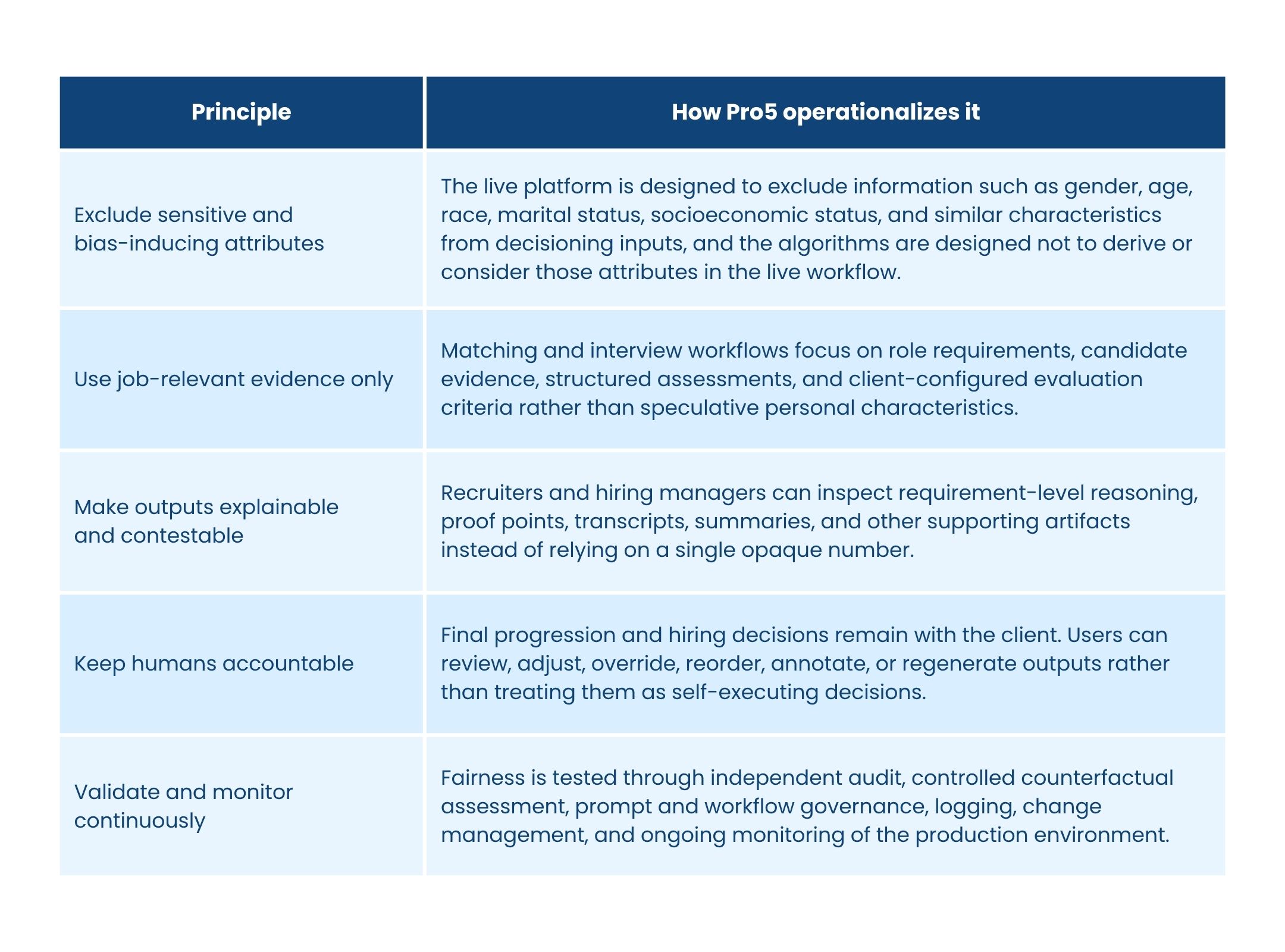

Our live platform is designed to exclude sensitive or potentially bias-inducing information such as gender, age, race, marital status, socioeconomic status, and similar characteristics from decisioning inputs. Our algorithms are explicitly designed not to derive or consider those attributes in the live workflow. Instead, Pro5 centers evaluation on job-relevant evidence, client-defined requirements, structured interview frameworks, and transparent proof points.

This whitepaper summarizes that fairness posture across Pro5's AI-supported hiring features and explains the findings of the independent 2026 fairness and bias audit covering AI job matching and AI interviewing. The audit used controlled test data because Pro5 does not collect structured demographic data in live workflows by design.

What Pro5 does - and does not do

1. Fairness by design

Pro5's fairness posture begins with a simple premise: hiring AI should help reduce inconsistency and subjectivity, but only if the system itself is constrained against bias. Fairness, therefore, is not a single model metric. It is an end-to-end design choice spanning data minimization, prompt and workflow controls, structured evaluation logic, human oversight, and continuous monitoring.

Core principles

Important fairness distinction

The independent audit used controlled demographic variants solely for testing fairness under consistent qualifications. That testing does not mean Pro5 collects or uses those attributes in live candidate decisioning. It means the platform was externally evaluated for differential treatment even though live demographic collection is excluded by design.

Why the 'unknowable' concept matters

In Pro5's matching workflow, each requirement is assessed individually. Where available evidence supports a requirement, it can be marked as met or not met. Where the evidence is insufficient, the requirement can be marked as unknowable. Unknowable requirements are excluded from the weighted score rather than automatically counting against the candidate. This design choice helps reduce missing-data bias and prevents silence or incomplete records from being treated as automatic failure.
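The effect of this rule can be illustrated with a minimal Python sketch. The status names, the weights, and the `weighted_score` function are hypothetical and chosen for illustration only; they are not Pro5's implementation. The sketch compares excluding unknowable requirements with treating them as failures.

```python
from enum import Enum


class Status(Enum):
    MET = "met"
    NOT_MET = "not_met"
    UNKNOWABLE = "unknowable"


def weighted_score(requirements, unknowable_counts_against=False):
    """Return a 0-1 score from (weight, Status) pairs, or None if nothing is knowable.

    By default, unknowable requirements are dropped from both the numerator
    and the denominator, so missing evidence does not lower the score.
    """
    earned = 0.0
    total = 0.0
    for weight, status in requirements:
        if status is Status.UNKNOWABLE and not unknowable_counts_against:
            continue  # excluded from the score entirely
        total += weight
        if status is Status.MET:
            earned += weight
    return earned / total if total > 0 else None


# Hypothetical candidate: two requirements with evidence, one without.
candidate = [
    (3.0, Status.MET),
    (1.0, Status.NOT_MET),
    (2.0, Status.UNKNOWABLE),
]

excluded = weighted_score(candidate)                                   # 3 / 4
penalized = weighted_score(candidate, unknowable_counts_against=True)  # 3 / 6
print(f"unknowable excluded: {excluded:.2f}")
print(f"unknowable as failure: {penalized:.2f}")
```

In this toy case the same evidence yields 0.75 when the unknowable requirement is excluded and 0.50 when it is counted as a failure, which is the missing-data penalty the design is meant to avoid.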

2. How the controls operate across the workflow

Candidate outreach drafting

For outreach, Pro5 uses an LLM within a Pro5-controlled workflow to generate editable drafts grounded in structured recruitment inputs and matching rationale. The feature is limited to draft generation: it does not autonomously determine contact eligibility, send messages, or operate outbound campaigns. That keeps editing, approval, and sending under the client's own recruiting controls.

AI-led screening interviews

Interview questions are role-specific and competency-based, built from job requirements and the client-configured framework rather than from generic prompts. Clients retain control over interview sections, topics, and questions. Candidates receive notice before starting the interview, and clients may provide an alternative human-led format when appropriate. Where audio or video is enabled, Pro5 may process interview artifacts such as recordings, transcripts, and integrity signals, but it does not capture or store biometric templates, faceprints, or voiceprints, and it is not designed for biometric categorization based on sensitive attributes.

Screening summary generation

Interview summaries are generated through a transcript-grounded, schema-constrained process. The output is an AI-generated synthesis layer (for example, summary, notable answers, strengths, weaknesses, improvements, scores, and explanations) and is presented separately from the underlying transcript and interview record. Authorized users can edit or annotate outputs, while the original artifacts remain part of the audit trail.

Candidate scoring

Pro5's job-matching score is a structured, requirement-based, weighted output rather than a black-box predictive hiring score. Hiring teams finalize the requirements, weights, and mandatory or nice-to-have status for each role. The system assesses each requirement individually, produces a met, not-met, or unknowable determination with proof points and explanations, and then calculates a normalized weighted score from the knowable requirements. The score is intended to support prioritization and review, not autonomous selection or rejection.

3. Independent fairness audit

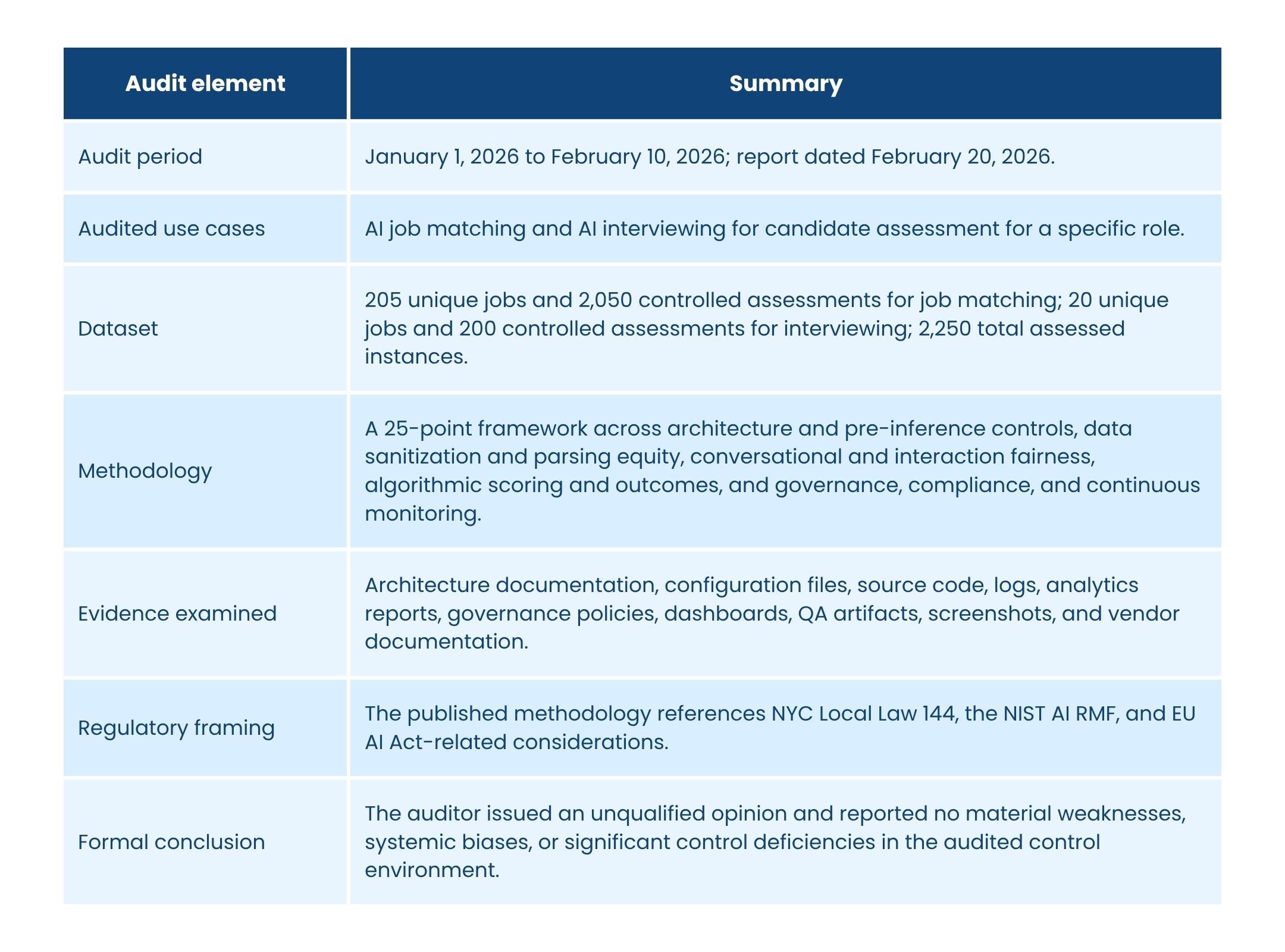

At Pro5's request, an independent auditor conducted an algorithmic fairness and bias audit of the Pro5 Automated AI Hiring System. The audit period ran from January 1, 2026, through February 10, 2026, and the report was issued on February 20, 2026. The published objective was to evaluate whether the control environment governing the hiring system was designed and operating effectively to mitigate algorithmic bias and to support compliance with applicable anti-discrimination and ethical AI expectations.

Audit scope and method

Why controlled test data was used

Because Pro5 does not capture structured demographic data in its live workflow by design, the auditor did not have sufficient historical demographic-category data to perform the required category-level calculations on production records. The audit, therefore, used controlled variants solely for bias testing. No demographic data was imputed or inferred from real candidates.

This distinction is central to understanding Pro5's fairness posture. The live system is designed to exclude sensitive and bias-inducing characteristics. The external audit then independently tests whether outcomes stay consistent when controlled variants are introduced for the limited purpose of validation.
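The logic of controlled-variant testing can be sketched in Python as follows. Everything here is hypothetical: the profile fields, the `score_profile` stand-in, and the group labels are illustrative assumptions, not the auditor's procedure or Pro5's scoring code. The sketch holds qualifications constant, varies only a synthetic test attribute, and reports the spread in scores.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class SyntheticProfile:
    years_experience: int
    has_required_certification: bool
    test_group: str  # synthetic label used only in controlled testing


def score_profile(profile: SyntheticProfile) -> float:
    """Hypothetical stand-in scorer that reads only job-relevant fields."""
    score = min(profile.years_experience, 10) / 10 * 0.6
    if profile.has_required_certification:
        score += 0.4
    return score


def variant_spread(base: SyntheticProfile, groups: list[str]) -> float:
    """Score variants that differ only in test_group; return max minus min."""
    scores = [score_profile(replace(base, test_group=g)) for g in groups]
    return max(scores) - min(scores)


base = SyntheticProfile(
    years_experience=5, has_required_certification=True, test_group="A"
)
spread = variant_spread(base, ["A", "B", "C"])
print(f"score spread across variants: {spread:.3f}")
```

Because the stand-in scorer never reads `test_group`, the spread is zero. Against a real system, a non-zero spread for otherwise identical profiles would be the signal to investigate.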

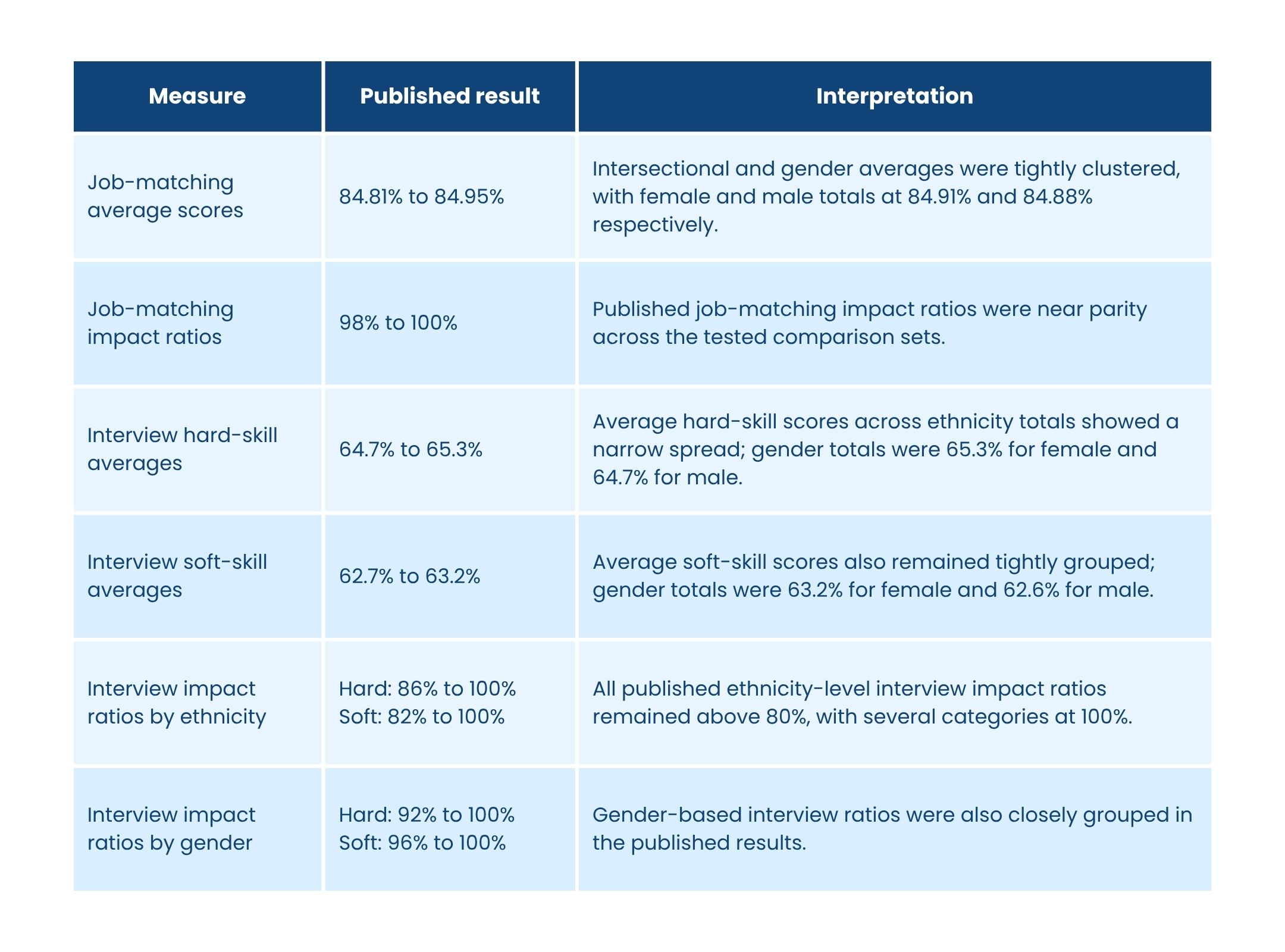

4. Published results at a glance

The published audit results show tightly clustered scores and near-parity outcome ratios across the tested groups for job matching, together with limited spread in the interview measures. All published impact ratios in the report were above 80 percent, with many at or near 100 percent.
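For readers unfamiliar with the metric, an impact ratio is commonly computed as each group's selection (or scoring) rate divided by the rate of the most favored group, with 80 percent serving as the familiar four-fifths rule of thumb. The Python sketch below uses that common definition. The exact calculation in the audit report is not reproduced here, and the group labels and counts are made-up illustrative values, not audit data.

```python
def impact_ratios(selected, totals):
    """Return each group's selection rate divided by the highest group rate."""
    rates = {g: selected[g] / totals[g] for g in totals}
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group has any selections; ratios are undefined")
    return {g: rate / best for g, rate in rates.items()}


# Illustrative counts only (not audit data).
totals = {"group_a": 100, "group_b": 100, "group_c": 100}
selected = {"group_a": 50, "group_b": 48, "group_c": 45}

ratios = impact_ratios(selected, totals)
for group, ratio in ratios.items():
    flag = "ok" if ratio >= 0.8 else "review"
    print(f"{group}: {ratio:.2f} ({flag})")
```

A ratio of 1.00 means a group's rate equals that of the most favored group; values below 0.80 are the ones conventionally flagged for closer review.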

Auditor opinion, paraphrased

The auditor concluded that the control environment governing Pro5's automated hiring system was suitably designed and operated effectively throughout the audit period to mitigate the risk of algorithmic bias, and issued an unqualified opinion.

How to interpret these results responsibly

The audit provides strong evidence that the audited workflows did not exhibit material differential treatment under controlled testing conditions. At the same time, the report itself notes that interview testing was performed on a smaller controlled sample and that controlled variants primarily test differential treatment while holding qualifications materially constant. For that reason, Pro5 treats the audit as an important validation layer, not as a reason to stop monitoring, governance, or human review.

5. Human oversight, candidate choice, and governance

Human oversight is built into the workflow

Pro5 is designed as decision support, not as an autonomous hiring decision-maker. Hiring managers and recruiters define the requirements and weightings that matter for a role, review the requirement-by-requirement rationale behind a score, inspect source artifacts such as interview reports and transcripts where enabled, and retain authority to advance, hold, reject, edit, override, or regenerate results. Suspicious proctoring events, where used, are subject to human review rather than self-executing consequences.

Candidate notice, alternatives, and contestability

Candidates receive just-in-time disclosures before AI-led interviews. Where a candidate does not wish to proceed with an AI-led format, the client may offer an alternative, such as a human-led live interview or an in-person process, consistent with the client's recruitment workflow and legal obligations. Pro5 also supports access, correction, deletion, export, and related privacy-rights workflows, while recruiters and clients can challenge or review AI-generated scores through evidence-based human review and recalculation.

Governance and model management

Pro5's fairness controls do not depend on a single model provider. The product architecture, workflow constraints, prompt controls, output schemas, logging, and human oversight mechanisms are controlled by Pro5, while supported underlying AI models may evolve over time. Pro5 also maintains technical documentation, AI governance materials, and change-management controls, and by default does not use customer candidate outcomes to train the core scoring model.

Scope notes

- The independent audit summarized here specifically covered AI job matching and AI interviewing.

- Outreach drafting and interview summary generation are described in this paper as product features and control designs; unless expressly stated, they should not be read as separately audited outcome claims.

- No AI system should be treated as infallible or as a substitute for meaningful human judgment, configuration discipline, and ongoing monitoring.

In practice, Pro5's position is that responsible hiring AI must do two things at once: reduce human inconsistency and guard against machine bias. That is why the live platform excludes sensitive and potentially bias-inducing attributes from decisioning, avoids deriving them for that purpose, centers evaluation on job-relevant evidence, keeps humans in control, and validates fairness through independent audit and continuous governance.

6. Diligence quick answers

Source basis and usage note

This whitepaper is a narrative summary based on Pro5's independent bias audit report and due diligence materials. It is intended to support customer diligence and explain Pro5's fairness posture. It is not legal advice and should be read alongside customer-specific contracts, privacy disclosures, and deployment-specific compliance materials.